The Phygital Hegemony: China's Strategic Consolidation of 3D AI and Spatial Entertainment Ecosystems

The Strategic Imperative of the Phygital Era

The global entertainment landscape is currently undergoing a structural transformation characterized by the convergence of the physical and digital realms, a phenomenon increasingly defined as the "Phygital" world.[1] This transition represents a fundamental departure from traditional two-dimensional media consumption toward immersive, three-dimensional interaction where the boundaries between real-world interactions and virtual simulations become indistinguishable.[1] At the center of this metamorphosis is China, a nation that has strategically positioned itself to dominate future entertainment technologies through an aggressive focus on 3D Artificial Intelligence (AI) and the rapid commercialization of explicit rendering techniques such as 3D and 4D Gaussian Splatting.[2, 3, 4]

China’s approach to cornering this market is not a product of isolated corporate innovations but is the result of a coordinated state-industry infrastructure designed to facilitate high-quality economic growth through technological "leapfrogging".[5, 6] The strategic framework underpinning this ambition is articulated in the "Next Generation Artificial Intelligence Development Plan," which sets a clear timeline for China to become the world’s primary AI innovation center by 2030.[5, 7] By 2026, the domestic AR and VR market is projected to reach $13.1 billion, growing at a compound annual growth rate (CAGR) of 43.8%, significantly outpacing the global average.[8] This growth is fueled by a pivot from building foundational models to expanding terminal applications, effectively commercializing AI for the masses.[9]

The divergence in strategy between China and the United States is particularly notable in the allocation of resources. While U.S. technology giants like Meta and Alphabet utilize massive "capital intensity," spending hundreds of billions of dollars on NVIDIA GPUs and energy-intensive data centers, Chinese firms are pursuing "efficiency intensity".[2] This model focuses on refining the software experience and optimizing algorithms to ensure that high-fidelity 3D content can be rendered in real-time on consumer-grade hardware, thereby bypassing the bottlenecks of computational scarcity.[2, 3] This efficiency allows Chinese companies to invest significantly less than their American counterparts—often one-fifth to one-sixth of the capital—while producing comparable or superior results in application-specific AI video generation and volumetric reconstruction.[2]

| Economic Indicator | China (Efficiency Model) | United States (Capital Model) |
|---|---|---|
| Projected AR/VR Spending (2026) | $13.1 Billion [8] | $74.7 Billion (Global) [8] |
| AI R&D Investment Ratio | ~1/6 of U.S. Investment [2] | High Intensity / GPU-heavy [2] |
| Market Focus | Terminal Applications & Efficiency | Foundational Infrastructure & Scale |
| Strategic Lead | 70% of Global AI Patent Filings [10] | Majority of Granted/Enforceable Patents [10] |

Algorithmic Primacy: The Rise of Gaussian Splatting

The technical pivot from implicit to explicit representation marks the most significant leap in digital content creation since the transition to digital cinematography.[11] For decades, the industry relied on triangle meshes, which define objects as hollow surfaces of connected polygons.[12] While effective for static or pre-rendered scenes, meshes are computationally expensive and struggle with the complexity of real-world captures.[12] The emergence of Neural Radiance Fields (NeRFs) attempted to solve this by using deep neural networks to represent 3D scenes implicitly, but their reliance on complex inference resulted in slow rendering speeds and significant hardware requirements.[3, 11]

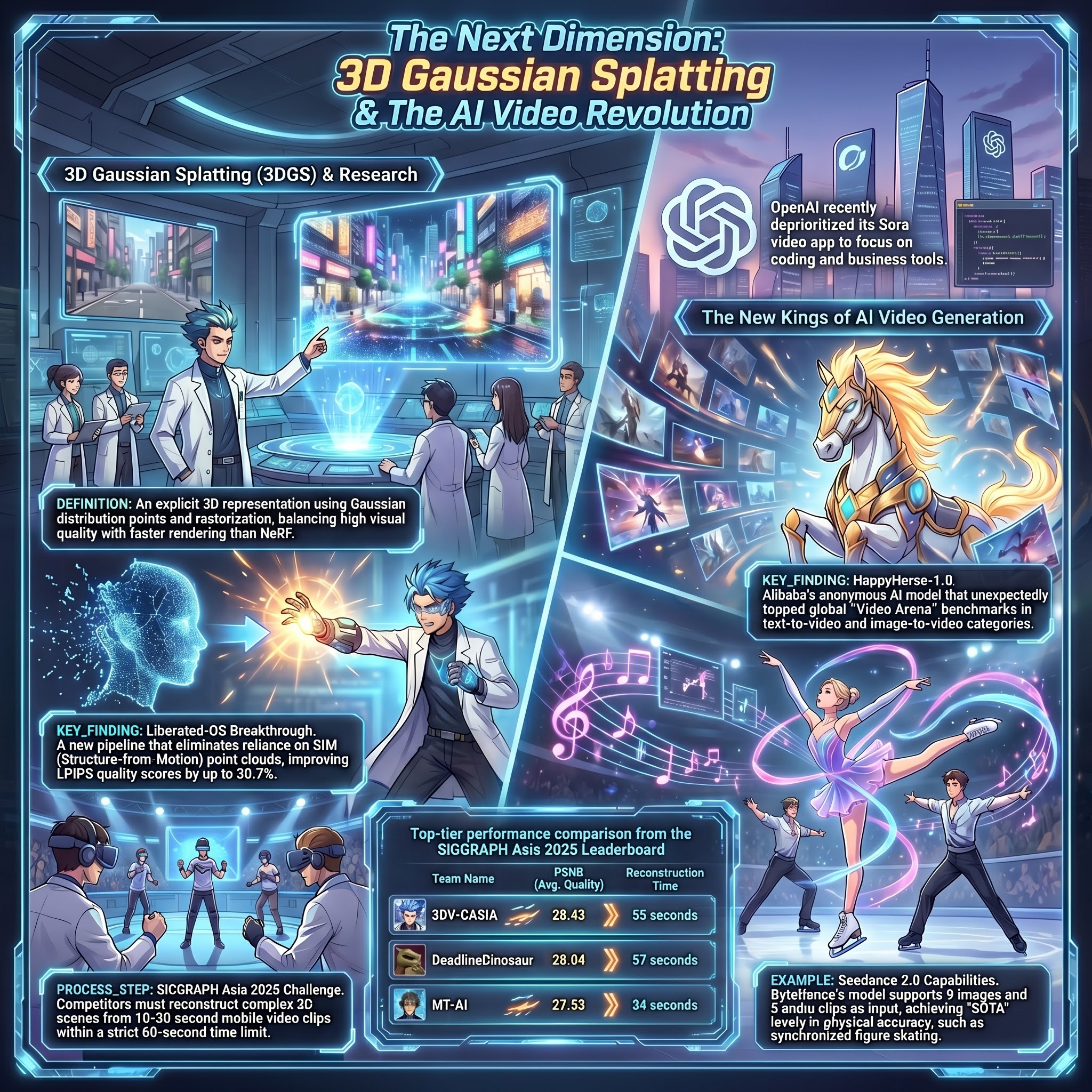

3D Gaussian Splatting (3DGS), introduced as an innovative alternative, uses semi-transparent ellipsoids, or "blobs," to form an image of a 3D scene.[12] Each ellipsoid encodes a specific color that varies depending on the viewing angle, allowing for photorealistic rendering through a high-performance rasterization process.[12, 13] Chinese research institutions, particularly Zhejiang University and the Chinese Academy of Sciences, have been at the forefront of refining this technology for real-time mobile and web deployment.[3, 13]
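In the reference 3DGS formulation, this view-dependent color is stored per Gaussian as spherical harmonic (SH) coefficients. The sketch below evaluates a degree-1 SH expansion for a single viewing direction. The constants and sign pattern follow the public reference implementation as we understand it; the function name, the coefficient layout, and the restriction to degree 1 are simplifying assumptions.

```python
import math

# Real SH basis constants for degrees 0 and 1
SH_C0 = 0.28209479177387814  # 1 / (2 * sqrt(pi))
SH_C1 = 0.4886025119029199   # sqrt(3 / (4 * pi))


def sh_color(sh, direction):
    """Evaluate view-dependent RGB from degree-1 SH coefficients.

    sh: four RGB triples [dc, c1, c2, c3] for one Gaussian (hypothetical layout).
    direction: vector from the camera towards the Gaussian; normalised here.
    """
    n = math.sqrt(sum(d * d for d in direction))
    x, y, z = (d / n for d in direction)
    rgb = []
    for ch in range(3):
        value = (SH_C0 * sh[0][ch]
                 - SH_C1 * y * sh[1][ch]
                 + SH_C1 * z * sh[2][ch]
                 - SH_C1 * x * sh[3][ch])
        # Shift by 0.5 and clamp negatives, so all-zero coefficients give mid-grey
        rgb.append(max(value + 0.5, 0.0))
    return rgb


# Same Gaussian seen from two opposite directions: only the red channel changes
coeffs = [[0.0, 0.0, 0.0], [0.0, 0.0, 0.0], [0.0, 0.0, 0.0], [1.0, 0.0, 0.0]]
print(sh_color(coeffs, (1.0, 0.0, 0.0)))   # red dims
print(sh_color(coeffs, (-1.0, 0.0, 0.0)))  # red brightens
```

Higher SH degrees add more basis terms in the same pattern. This makes color vary more sharply with viewing angle, at the cost of extra per-Gaussian storage.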

The Mathematical Efficiency of Splatting

The core advantage of 3DGS lies in its balance of quality and efficient computation. Unlike NeRFs, which require sampling camera rays through a continuous volume, 3DGS explicitly represents each scene as a collection of learnable 3D Gaussians.[14] This allows for the use of differentiable rasterization, which accelerates rendering by avoiding the computationally expensive ray sampling operations.[14] In a standard implementation, Gaussians are visualized as ellipsoids in 3D space, characterized by parameters such as pose, shape, and color, and are "splatted" onto an imaging plane using α-blending to synthesize the final image.[14, 15]

$$C_{\text{final}} = \sum_{i=1}^{N} c_i \, \alpha_i \prod_{j=1}^{i-1} \left(1 - \alpha_j\right)$$

where $c_i$ is the color of the $i$-th Gaussian and $\alpha_i$ is its opacity. This explicit structure enables real-time rendering speeds of up to 361 FPS on modern hardware, a performance level unreachable by traditional NeRF methods.[16]
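The blending equation can be evaluated for one pixel by sorting the overlapping Gaussians by depth and accumulating them front to back. The running product of (1 - alpha_j) is carried along as a "transmittance" value. This is a minimal single-pixel sketch: the early-termination threshold `eps` is an assumed value, and a real rasterizer performs this work per screen tile on the GPU.

```python
def composite_front_to_back(colors, alphas, depths, eps=1e-4):
    """Alpha-blend the splats covering one pixel.

    Implements C = sum_i c_i * a_i * prod_{j<i} (1 - a_j) after sorting by depth.
    Returns the RGB color and the remaining transmittance.
    """
    order = sorted(range(len(depths)), key=lambda k: depths[k])  # nearest first
    color = [0.0, 0.0, 0.0]
    transmittance = 1.0  # running product of (1 - alpha_j) for splats already blended
    for k in order:
        weight = alphas[k] * transmittance
        for ch in range(3):
            color[ch] += colors[k][ch] * weight
        transmittance *= 1.0 - alphas[k]
        if transmittance < eps:  # pixel is effectively opaque; skip the rest
            break
    return color, transmittance


# A half-transparent red splat in front of an opaque blue one (input order is unsorted)
rgb, t = composite_front_to_back(
    colors=[(0.0, 0.0, 1.0), (1.0, 0.0, 0.0)],
    alphas=[1.0, 0.5],
    depths=[2.0, 1.0],
)
print(rgb, t)  # [0.5, 0.0, 0.5] 0.0
```

The early exit is one reason splatting stays fast: once a pixel is saturated, the Gaussians behind it cost nothing.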

Chinese researchers have further enhanced this efficiency through frameworks like ROI-GS, which utilizes a "divide-and-conquer" paradigm to allocate resources based on scene complexity.[17] By focusing fine details on Regions of Interest (ROIs) through object-guided camera selection, ROI-GS improves local rendering quality by up to 2.96 dB PSNR while reducing the overall model size by approximately 17%.[17] This targeted optimization is critical for the "efficiency intensity" model, ensuring that high-resolution 3D environments can be streamed to mobile devices without requiring excessive bandwidth or local compute power.[2, 17]
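Because PSNR is logarithmic in the mean squared error (MSE), a dB gain corresponds to a multiplicative reduction in error. The short sketch below uses the standard PSNR definition for images normalised to [0, 1] and shows what the reported 2.96 dB means in those terms. The helper names are our own.

```python
import math


def psnr(mse, max_value=1.0):
    """Peak signal-to-noise ratio in dB for images with values in [0, max_value]."""
    return 10.0 * math.log10(max_value ** 2 / mse)


def mse_factor_for_gain(delta_db):
    """Factor by which the MSE must shrink to raise PSNR by delta_db."""
    return 10.0 ** (-delta_db / 10.0)


# A 2.96 dB local gain corresponds to roughly halving the error in the ROI
print(round(mse_factor_for_gain(2.96), 3))  # 0.506
```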

Fast Gaussian Rasterization Breakthroughs

The development of the "fast_gauss" renderer—a high-performance 3DGS rasterizer based on geometry shaders and global CUDA sorting—represents a major contribution to the ecosystem.[13] This renderer can be 5 to 10 times faster than original CUDA implementations by minimizing repeated work and stalls.[13] Specifically, it computes the 3D-to-2D covariance projection only once per Gaussian rather than four times for a quad, and it uses non_blocking=True data passing to reduce explicit synchronizations between the GPU and CPU.[13] For dense point clouds exceeding one million points, this shader-based architecture maintains high throughput, although standard CUDA implementations may still be preferred for extremely sparse datasets.[13]
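The per-Gaussian step that fast_gauss reportedly avoids repeating is the construction of each Gaussian's 3D covariance and its projection to a 2D screen-space ellipse. A minimal pure-Python sketch follows, under these assumptions: inputs are already in camera space, the camera is a pinhole with focal lengths fx and fy, and the world-to-camera rotation and the small diagonal dilation used by practical renderers are both omitted. The function names are hypothetical, and this is not fast_gauss code.

```python
import math


def quat_to_rotation(w, x, y, z):
    """3x3 rotation matrix from a quaternion (normalised here)."""
    n = math.sqrt(w * w + x * x + y * y + z * z)
    w, x, y, z = w / n, x / n, y / n, z / n
    return [
        [1 - 2 * (y * y + z * z), 2 * (x * y - w * z), 2 * (x * z + w * y)],
        [2 * (x * y + w * z), 1 - 2 * (x * x + z * z), 2 * (y * z - w * x)],
        [2 * (x * z - w * y), 2 * (y * z + w * x), 1 - 2 * (x * x + y * y)],
    ]


def matmul(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


def transpose(a):
    return [list(row) for row in zip(*a)]


def covariance_3d(scale, quat):
    """Sigma = R S S^T R^T from per-axis scales and a rotation quaternion."""
    r = quat_to_rotation(*quat)
    s = [[scale[0], 0.0, 0.0], [0.0, scale[1], 0.0], [0.0, 0.0, scale[2]]]
    m = matmul(r, s)
    return matmul(m, transpose(m))


def project_covariance(cov_cam, mean_cam, fx, fy):
    """2x2 screen-space covariance J Sigma J^T (EWA-style local affine approximation).

    J is the Jacobian of (x, y, z) -> (fx * x / z, fy * y / z) at the Gaussian's mean.
    """
    x, y, z = mean_cam
    j = [[fx / z, 0.0, -fx * x / (z * z)],
         [0.0, fy / z, -fy * y / (z * z)]]
    return matmul(matmul(j, cov_cam), transpose(j))


# Computed once per Gaussian; the four quad corners can reuse the result.
cov = covariance_3d(scale=(0.2, 0.2, 0.2), quat=(1.0, 0.0, 0.0, 0.0))
print(project_covariance(cov, mean_cam=(0.0, 0.0, 2.0), fx=100.0, fy=100.0))
```

In a quad-based renderer, calling this once per Gaussian rather than once per quad vertex is the kind of saving the paragraph above describes.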

| Rendering Method | Reconstruction Time (Target) | Rendering Speed (FPS) | Architecture |
|---|---|---|---|
| NeRF (Standard) | Hours | <1 FPS | Implicit / Ray-marching |
| 3DGS (Original) | Minutes | 100-200 FPS | Explicit / Rasterization |
| Fast Gaussian Rasterizer | <1 Minute [3] | 277-583 FPS (figures reported for 4D-Rotor GS on RTX 3090/4090) [18] | CUDA Sorted / Shader-based [13] |
| ENeRFi | N/A | 5.0 FPS (Mobile) [13] | Real-time Volumetric |

4D Dynamics and Temporal Volumetric Video

While 3DGS captures static environments, the transition to 4D Gaussian Splatting (4DGS) is what truly enables the future of interactive entertainment.[4] 4DGS adds the dimension of time, allowing complex motion to be captured, stored efficiently, and rendered instantly.[4, 18] This technology is being championed by Chinese startups and labs such as 4DV.ai and Zhejiang University’s ZJU3DV, which are moving beyond the "flat rectangles" of current video to dynamic objects and scenes that viewers can explore from any angle.[4, 19]

FreeTimeGS and "Anytime Anywhere" Primitives

A notable breakthrough in this field is the FreeTimeGS framework, which introduces "Anytime Anywhere" Gaussian primitives.[13] Unlike previous 4D methods that attempted to map canonical primitives to an observation space using deformation fields—a process that often failed during sudden or abrupt movements—FreeTimeGS allows Gaussians to be free from canonical constraints.[13, 18]

Each primitive in FreeTimeGS is endowed with a specific motion function, allowing it to move to neighboring regions over time, which significantly reduces temporal redundancy.[13] Furthermore, the system employs a temporal opacity function to modulate each Gaussian's visibility relative to the timeline.[13] This provides "strong flexibility" in modeling dynamic 3D scenes and enables the system to outperform recent baselines like STGS and Deform-3DGS by a large margin in terms of rendering quality.[13]
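One way to picture a free-moving primitive is as a Gaussian that carries its own time, velocity, and visibility window. The sketch below assumes a constant-velocity motion function and a Gaussian-shaped temporal opacity, which is our reading of the FreeTimeGS description. The class and field names are hypothetical and are not taken from the FreeTimeGS code.

```python
import math
from dataclasses import dataclass


@dataclass
class FreeTimeGaussian:
    """Hypothetical container for one 4D primitive with its own motion and lifetime."""
    position: tuple  # (x, y, z) centre at time t0
    velocity: tuple  # (vx, vy, vz), constant-velocity motion function
    t0: float        # time at which the primitive is centred
    duration: float  # temporal scale of its visibility window
    opacity: float   # peak opacity, reached at t0

    def position_at(self, t):
        dt = t - self.t0
        return tuple(p + v * dt for p, v in zip(self.position, self.velocity))

    def opacity_at(self, t):
        # Gaussian-shaped temporal opacity: full strength at t0, fading away from it
        return self.opacity * math.exp(-0.5 * ((t - self.t0) / self.duration) ** 2)


g = FreeTimeGaussian(position=(0.0, 0.0, 0.0), velocity=(1.0, 0.0, 0.0),
                     t0=1.0, duration=0.5, opacity=0.8)
print(g.position_at(3.0), g.opacity_at(1.0))  # (2.0, 0.0, 0.0) 0.8
```

Because each primitive fades out away from its own t0, a fast motion can be represented by handing off between primitives. No single canonical primitive has to be deformed across the whole sequence.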

The 4D-Rotor Gaussian Splatting method, another Chinese-led research effort, similarly utilizes anisotropic 4D XYZT Gaussians to model complicated dynamics.[18] By implementing temporal slicing and splatting techniques in a highly optimized CUDA framework, researchers achieved real-time rendering speeds of 277 FPS on an RTX 3090 and 583 FPS on an RTX 4090.[18] These speeds are essential for the future of interactive films, sports replays, and virtual performances where the viewer controls the perspective in real-time.[4, 19]
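Temporal slicing can be illustrated with standard Gaussian conditioning. Fixing t in a 4D (x, y, z, t) Gaussian yields a 3D Gaussian whose centre drifts with t and whose opacity fades with distance from its temporal centre. This minimal sketch takes the 4x4 covariance as given, whereas 4D-Rotor builds it from scales and a 4D rotation, which we omit. The function name is hypothetical, and this is not the authors' CUDA implementation.

```python
import math


def slice_4d_gaussian(mean4, cov4, t):
    """Condition an anisotropic (x, y, z, t) Gaussian on a time t.

    mean4: [mx, my, mz, mt]; cov4: 4x4 symmetric positive-definite covariance.
    Returns the 3D mean and covariance at time t plus a temporal weight in (0, 1]
    that scales the primitive's opacity.
    """
    s_tt = cov4[3][3]
    s_xt = [cov4[i][3] for i in range(3)]  # space-time cross-covariance
    dt = t - mean4[3]
    # Conditional mean: the centre drifts along the space-time correlation
    mean3 = [mean4[i] + s_xt[i] / s_tt * dt for i in range(3)]
    # Conditional covariance (Schur complement); independent of t
    cov3 = [[cov4[i][j] - s_xt[i] * s_xt[j] / s_tt for j in range(3)] for i in range(3)]
    # Marginal density in t, normalised to 1 at the temporal centre
    weight = math.exp(-0.5 * dt * dt / s_tt)
    return mean3, cov3, weight


cov4 = [[1.0, 0.0, 0.0, 0.5],
        [0.0, 1.0, 0.0, 0.0],
        [0.0, 0.0, 1.0, 0.0],
        [0.5, 0.0, 0.0, 1.0]]
mean3, cov3, w = slice_4d_gaussian([0.0, 0.0, 0.0, 0.0], cov4, t=2.0)
print(mean3, cov3[0][0], round(w, 4))  # [1.0, 0.0, 0.0] 0.75 0.1353
```

Once sliced, each primitive is an ordinary 3D Gaussian. It can then go through the same projection and compositing steps as static 3DGS, which is why the dynamic case can reach similar frame rates.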

The Role of 4DV.ai in Content Democratization

The commercialization of 4DGS is being driven by platforms like 4DV.ai, which allow users to upload 2D video (optimally 2K or 4K) and generate a 4D splatting model with color, motion, and sound.[20] The system analyzes spatial and temporal cues to create a model that can be interacted with via a demo player, supporting zoom, rotation, and free movement.[20] While some critics note that the model's fidelity can break down when viewed from extreme angles not captured by the input cameras, the technology’s ability to "calculate what is nearby what" and extrapolate hidden areas represents a major leap in visual media.[20]

This technology is already finding its way into specialized hardware. PICO, the ByteDance-owned XR company, has integrated 4DGS support into its Unreal Engine 5 plugin, allowing for the rendering of animated 4DGS content on the PICO 4 Ultra headset.[21] This enables use cases such as "truly 3D videos" that users can walk through and lifelike animated avatars for XR video calls.[12]

The Corporate Vanguard: Platforms of Mass Creation

China’s dominance is further cemented by its tech giants—ByteDance and Tencent—who have integrated 3D AI and Gaussian Splatting into their massive content ecosystems.[2, 22, 23] These companies are not just developing tools but are building the foundations for a new era of user-generated content (UGC) where complex 3D assets are as easy to create and share as flat images and videos.[24]

ByteDance: Seedance 2.0 and the Consistency Breakthrough

ByteDance’s Seedance 2.0 represents a significant advance in maintaining spatial and character consistency in AI-generated video.[25, 26] Continuity has historically been the "hardest part" of AI video, where preserving identity across angle changes and transitions was nearly impossible.[25] Seedance 2.0 addresses this through a multimodal input system that uses text, images, video, and audio as reference-guided roles.[25]

A creator can upload a product image to define the subject, a motion clip to guide the camera behavior, and an audio file to shape the pacing.[25] The model implicitly understands the consistency of three-dimensional space, correctly replicating background parallax and shadow lengths during smooth camera pans.[27] This allows creators to "replicate by imitation," using high-difficulty movement sequences—such as synchronized mid-air spins in pair figure skating—and applying them to their own characters while strictly following physical laws.[28, 29]

| Seedance 2.0 Feature | Creative Utility | Impact on Production |
|---|---|---|
| Multimodal Reference | Use images/videos for motion & style | Direction-style control over generation [25] |
| Character Consistency | Preserve identity across shots | Reliable multi-clip storytelling [25] |
| Physics-Based Realism | Natural gravity/fluid interaction | Elimination of "AI glitches" [28, 29] |
| Native Audio Sync | Joint generation of sound and visuals | Savings in post-production time [25, 26] |

Tencent: Hunyuan Video and Industrial Integration

Tencent’s Hunyuan Video focuses on professional content creators, offering a high-quality, movie-grade video generation tool that integrates seamlessly with industrial-grade commercial applications.[30] The model is trained on a spatial-temporally compressed latent space using a Causal 3D VAE, which reduces the number of tokens and allows for training videos at original resolution and frame rates.[22]
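The token-reduction claim can be made concrete. The HunyuanVideo repository describes its causal 3D VAE as compressing time by 4x and space by 8x into 16 latent channels. Assuming those ratios, the small helper below gives the latent shape for a 129-frame 720p clip; the clip size is an illustrative choice that satisfies the 1 + 4k frame constraint. The helper is hypothetical and not part of the HunyuanVideo API.

```python
def causal_vae_latent_shape(frames, height, width, t_ratio=4, s_ratio=8, channels=16):
    """Latent tensor shape (T', H', W', C) produced by a causal 3D VAE.

    A causal VAE encodes the first frame on its own and then compresses the
    remaining frames in groups of t_ratio, so it expects 1 + k * t_ratio frames.
    """
    if (frames - 1) % t_ratio or height % s_ratio or width % s_ratio:
        raise ValueError("need frames = 1 + k*t_ratio and height/width divisible by s_ratio")
    return ((frames - 1) // t_ratio + 1, height // s_ratio, width // s_ratio, channels)


shape = causal_vae_latent_shape(129, 720, 1280)
pixels = 129 * 720 * 1280 * 3
latents = shape[0] * shape[1] * shape[2] * shape[3]
print(shape, round(pixels / latents, 1))  # (33, 90, 160, 16) 46.9
```

This compares raw value counts only. The number of transformer tokens also depends on how the latent is patched, which the sketch does not model.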

By utilizing a pre-trained Multimodal Large Language Model (MLLM) as its text encoder, Hunyuan achieves better image-text alignment than traditional T5 encoders.[22] This precision helps the model reproduce expressions and movements with human-like accuracy, making it particularly suitable for advertising and the film industry.[30] In the realm of virtual influencers, Tencent's technology can generate natural lip-syncing across English, Chinese, Japanese, and Korean, facilitating the global distribution of localized 3D avatars.[26, 31]

Startup Ecosystem and the 3D UGC Platform

Beyond the tech giants, a vibrant startup ecosystem is emerging, driven by the goal of making 3D creation accessible to non-professionals.[24] VAST AI, the company behind Tripo AI, is a primary example of this trend.[24, 32]

Tripo AI: Scalable Spatial Computing

Tripo AI has introduced an enterprise-focused framework designed to deliver precise mesh generation and high-resolution material outputs at scale.[32] Utilizing an advanced neural architecture with over 200 billion parameters, Tripo AI enables the rapid transition from 2D concepts to fully topological 3D models in approximately 20 seconds.[32] As of 2026, Tripo’s framework has been adopted by over 700 industry clients and is used by more than 6.5 million creators worldwide.[32, 33]

The company’s focus is on providing reliable, editable geometry that can be integrated into professional pipelines such as Unreal Engine and Unity.[32, 34] Tripo AI’s automatic skinning and rigging tools, which use AI to intelligently calculate vertex weights and prevent mesh deformation, allow artists to complete the entire 3D pipeline—modeling, texturing, retopology, and rigging—up to 50% faster than manual methods.[34] This is particularly critical for the "spatial computing era," which requires massive amounts of immersive content that manual modeling cannot provide at scale.[32]
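Vertex weights of this kind are typically consumed by linear blend skinning. In that scheme, each vertex follows a weighted average of its bones' transforms, and badly chosen weights are a common source of visible mesh artifacts when a rig moves. The sketch shows only that standard technique, to illustrate what the weights do. It is not Tripo AI's method, and the function name is hypothetical.

```python
def linear_blend_skin(vertex, weights, bone_transforms):
    """Deform one vertex with linear blend skinning: v' = sum_i w_i * (M_i v).

    vertex: (x, y, z); weights: one non-negative weight per bone (normalised here);
    bone_transforms: one 3x4 affine matrix [R | t] per bone.
    """
    total = sum(weights)
    if total <= 0:
        raise ValueError("skinning weights must have a positive sum")
    vh = (*vertex, 1.0)  # homogeneous coordinates so the translation column applies
    out = [0.0, 0.0, 0.0]
    for w, m in zip(weights, bone_transforms):
        for r in range(3):
            out[r] += (w / total) * sum(m[r][c] * vh[c] for c in range(4))
    return tuple(out)


identity = [[1.0, 0.0, 0.0, 0.0], [0.0, 1.0, 0.0, 0.0], [0.0, 0.0, 1.0, 0.0]]
shift_x = [[1.0, 0.0, 0.0, 2.0], [0.0, 1.0, 0.0, 0.0], [0.0, 0.0, 1.0, 0.0]]
# A vertex shared equally by a static bone and a bone translated 2 units along x
print(linear_blend_skin((1.0, 1.0, 1.0), [0.5, 0.5], [identity, shift_x]))  # (2.0, 1.0, 1.0)
```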

| Tripo AI Metric | Value (Dec 2025/2026) | Significance |
|---|---|---|
| Global Creator Base | 6.5 Million [33] | Rapid expansion of 3D UGC |
| Model Parameters | 200 Billion [32] | High geometric accuracy |
| Generation Speed | ~20 Seconds [32] | Near-real-time asset creation |
| Annual Recurring Revenue (ARR) | $12 Million [24, 35] | Achieving profitability in AI 3D |

VAST and the Democratization Philosophy

Simon Song, founder of VAST, identifies the biggest barrier to the metaverse and game development as the high labor intensity of creating art assets.[24] His goal is to use AI to make 3D content as common and easy to create as images and videos.[24] VAST’s open-source TripoSR model, developed with Stability AI, can achieve 3D generation in under 0.5 seconds on an NVIDIA A100, demonstrating the potential for near-instant 3D creation.[35] This "democratization" of 3D creation is a key component of China’s strategy to corner the future entertainment market by ensuring that the vast majority of virtual world content is generated using Chinese technology.[24, 33]

Economic Logic: Efficiency Intensity vs. Capital Intensity

A prominent insight from the current rivalry is that the U.S. and China are operating on fundamentally different economic philosophies regarding AI development.[2] U.S. companies are largely following a path of "capital intensity," where the competitive edge is derived from the sheer scale of investment in compute and data.[2, 36] This approach, however, has led to skyrocketing investment costs for the entire industry, primarily due to the high prices of NVIDIA GPUs.[2]

The Asset Devaluation Risk

Chinese industry analysis highlights a critical problem with the U.S. model: if market competition leads to lower-priced, better-performing GPUs in the future, the massive investments currently being made by U.S. giants will result in significant asset devaluation.[2] Furthermore, the lack of a closed-loop business model for many of these large-scale investments creates an "inefficiency bomb" that could explode if low-cost Chinese alternatives reach the global market.[2]

China's "efficiency intensity" model addresses this by focusing on optimization at the foundational level to avoid redundant construction.[2] Government investment in AI is significant but targeted toward solving chip and data center computing power issues collectively rather than through individual company-led sprawl.[2] According to this analysis, the coordinated approach ensures that even with limited computing power, which it attributes to U.S. "suppression," China remains "not bottlenecked" and is continuously producing results that are more refined and less wasteful.[2]

Diffusion as a National Capability

The race for dominance is not just about invention but about "diffusion"—the speed at which a technology moves from experimental use to field adoption.[36] China’s military-civil fusion (MCF) model allows for the rapid routing of commercial techniques into military and industrial applications at scale.[36] For the U.S., bureaucratic friction and data hoarding have historically slowed the adoption of AI, even when the underlying technology is advanced.[36] China’s momentum is dangerous because it is "built for diffusion," pushing capabilities into the force and the market through doctrine, procurement, and mobilization.[36]

Governmental Scaffolding: Standards and Sovereignty

The Chinese government plays a proactive role in setting the standards that will govern future 3D and spatial technologies.[5, 37] By establishing national and sector standards, China aims to ensure digital sovereignty and exert influence over international technical bodies.[6, 38]

The 2024 AI Standardization Guidelines

In July 2024, the Ministry of Industry and Information Technology (MIIT) and other agencies issued the "Guidelines for the Construction of a Comprehensive Standardization System for the National Artificial Intelligence Industry".[37, 39] These guidelines aim to build a system covering the whole life cycle of the AI industry, with a focus on "enabling new industrialization".[39] By 2026, China plans to have established more than 50 new national and sector standards, with participation from over 1,000 enterprises.[39]

Key technologies targeted for standardization include:

Embodied AI: Standards for AI that interacts with the physical world, critical for robotics and XR.[39]

Cross-media Intelligence: Standards for intelligence across different media types, including 3D and video.[37]

Spatio-temporal Data Sensing: Requirements for security in intelligent and connected vehicles and spatial environments.[39]

| Standardization Goal (by 2026) | Target Metric |
|---|---|
| New National/Sector Standards | > 50 [39] |
| Participating Enterprises | > 1,000 [39] |
| International Standards Engagement | > 20 international standards [39] |
| Humanoid Robot Mass Production | > 140 Manufacturers [40] |

National AI Innovation and Development Pilot Zones

The Chinese government has also established roughly 20 "National AI Pilot Zones" to conduct technology demonstrations, policy tests, and social experiments.[41] These zones are located in cities with a core AI industry exceeding 5 billion yuan and focus on the deep integration of AI with local economic and social conditions.[41] They serve as testing grounds for data openness, intellectual property protection, and security management, providing replicable experiences that can be scaled across the country.[41] By organizing long-term social experiments, the government seeks to record and assess the comprehensive impact of AI on society, ensuring that technological progress aligns with national stability and governance goals.[6, 41]

Hardware Integration and the Bridge to Reality

The convergence of China’s 3D AI and its advanced manufacturing sector has led to the development of hardware that acts as the primary interface for future entertainment.[8, 42] Chinese startups are "shrinking headsets into glasses" and reimagining how the physical and virtual worlds merge.[42]

PICO and the Spatial Application Paradigm

ByteDance’s PICO is central to this hardware-software synergy.[23, 43] PICO OS 6 introduces a new 3D interaction paradigm for spatial applications, supporting hand gestures, eye tracking, and gaze coordination.[23] PICO has also launched the XRoboToolkit, a cross-platform XR robotic teleoperation framework, which demonstrates the "phygital" bridge between XR devices and embodied intelligence.[21, 23]

The PICO 4 Ultra features first-party support for 3D Gaussian Splatting, allowing developers to load and render high-fidelity static and dynamic 3DGS content.[12] By open-sourcing the "PICO Splat" plugin for Unreal Engine 5, ByteDance is enabling developers worldwide to experiment with Gaussian Splatting in their projects, further entrenching PICO hardware as the standard for 3D AI rendering.[21, 43]

The Domestic Semiconductor Strategy

To maintain independence from U.S. technological constraints, China is focusing on domestic System-on-Chip (SoC) development for AR/VR.[6, 44] While companies like Qualcomm dominate the high-end SoC market with chips like the Snapdragon XR2+ Gen 2 (supporting 4.3K resolution per eye), Chinese players like Rockchip and HiSilicon are developing competitive alternatives.[44, 45, 46]

The Rockchip RK3588, for instance, features a 6 TOPS NPU optimized for INT8 operations, achieving 244 FPS for ResNet18-class models.[47] While currently trailing Qualcomm in raw GPU rendering for high-resolution VR, its performance-per-watt (49 FPS per Watt vs. 1.25 for a desktop GPU) makes it highly suitable for lightweight AR glasses and edge AI applications.[47]
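The quoted figures can be cross-checked with simple arithmetic, assuming the 244 FPS and 49 FPS-per-watt numbers come from the same benchmark run. On that assumption, the NPU draws roughly 5 W and is about 39 times more efficient than the desktop GPU. Note that perf-per-watt compares efficiency, not absolute throughput.

```python
rk3588_fps = 244.0            # ResNet18-class throughput quoted above
rk3588_fps_per_watt = 49.0    # quoted efficiency of the RK3588 NPU
desktop_fps_per_watt = 1.25   # quoted efficiency of the desktop GPU

implied_power_w = rk3588_fps / rk3588_fps_per_watt  # power implied by the two RK3588 figures
efficiency_advantage = rk3588_fps_per_watt / desktop_fps_per_watt

print(f"implied RK3588 power: {implied_power_w:.1f} W")   # 5.0 W
print(f"perf/W advantage: {efficiency_advantage:.1f}x")   # 39.2x
```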

| Hardware Component | Chinese Player | International Comparison |
|---|---|---|
| XR SoC | Rockchip RK3588 [47] | Snapdragon XR2 Gen 2 [45] |
| AR Glasses | RayNeo (35.4% Share) [48] | Meta (Ray-Ban Meta) [49] |
| VR Headsets | PICO 4 Ultra [12] | Meta Quest 3 [50] |
| Optical Solutions | Sunny Optical [51] | ams-Osram [51] |

The 2026 Smart Glasses Breakout

By 2026, smart glasses are expected to become as routine as smartphones in China.[49] The sales volume is forecasted to exceed 3.2 million units, driven by breakthroughs in Micro-LED displays and high-density batteries.[48, 49] For the first time, smart glasses have been included in the scope of Chinese national subsidies, reflecting the government's high regard for the category.[48]

New entries from Huawei and Xiaomi are particularly significant. Huawei’s 2026 AI glasses, powered by HarmonyOS, offer real-time translation, simultaneous interpretation, and cross-platform collaboration.[52, 53] Xiaomi’s Mijia Smart Audio Glasses prioritize utility and aesthetics, normalizing smart eyewear as a functional accessory rather than a tech experiment.[49] Huawei’s release of camera-equipped AI glasses in early 2026 allows the company to capture local demand in the absence of Meta’s retail presence in China.[54]

Societal and Industrial Infusion

The impact of these technologies extends beyond gaming into virtual tourism, cultural heritage, and industrial training, creating a "comprehensive empowerment" of the digital economy.[1, 37]

Digital Heritage and Living Documentation

One of the most profound applications of 3DGS in China is the preservation of intangible cultural heritage (ICH).[55] A "living documentation" paradigm integrates Kunqu opera performances with Suzhou classical gardens using multi-camera arrays and Gaussian Splatting.[55] This approach creates an "Integrated Digital Theater" that enables photorealistic, site-specific reenactments.[55] Unlike traditional motion capture that neglects the environment, 3DGS preserves the spatiality and realism of the performance within its historical context, enhancing cultural understanding and identification.[55]

Virtual Tourism and Digital Twins

China is also leveraging digital twin technology for museums and cultural sites.[17] ROI-GS is used for efficiently reconstructing objects within large-scale scenes with high detail.[17] The "MatrixCity" dataset, developed by the Chinese University of Hong Kong and the Shanghai AI Laboratory, simulates urban environments through oblique-photography configurations, providing a blueprint for city-scale digital twins.[56] These twins are used not only for tourism and education but also for emergency rescue and disaster response, where real-time, high-fidelity 3D maps are essential.[56]

The 2026 Space-Based Computing Constellation

In a forward-looking move, China has launched a feasibility study for a space-based intelligent computing constellation.[57] This constellation would deploy computational capacity in space, enabling seamless global coverage and orbital intelligent processing.[57] By slashing data transmission latency from hours to seconds, space-based computing would support scenarios such as disaster early warning, marine observation, and polar expeditions.[57] This strategic "outer-space" data center initiative aims to break through ground-based computing bottlenecks and to serve national strategies for a globally ubiquitous computing network.[57]

Intellectual Property and the Global Standards War

China leads the world in AI patent filings, accounting for over 70% of global applications.[10] However, the quality and international scope of these patents remain points of intense debate.[58, 59]

Patent Quantitative Dominance

Between 2014 and 2023, more than 38,000 generative AI inventions originated from China, six times more than second-place United States.[60] Tencent, Ping An Insurance, and Baidu are the top patent applicants globally.[60] Despite this numerical lead, many Chinese patents are only protected within China, with only 7.3% of filings pursued abroad.[59] This suggests that while China is aggressively building a domestic IP fortress, its influence in overseas patent enforcement is still maturing.[10, 59]

| Organization | GenAI Inventions (2014-2023) | Country |
|---|---|---|
| Tencent | 2,074 [60] | China |
| Ping An Insurance | 1,564 [60] | China |
| Baidu | 1,234 [60] | China |
| IBM | 601 [60] | USA |
| Alibaba Group | 571 [60] | China |
| Samsung Electronics | 468 [60] | South Korea |
| Alphabet | 443 [60] | USA |
| ByteDance | 418 [60] | China |

International Standards Proposals

China is increasingly active in setting international standards for 3D data. The Audio Video coding Standard (AVS) Workgroup has completed the first generation of its point cloud compression standard, AVS PCC, which adopts coding tools different from the MPEG counterparts.[61, 62] Chinese contributions to MPEG AI-3DGC and JPEG Point Cloud standards include technologies such as GRASP-Net and SparsePCGC for geometry and attribute compression.[62]
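Standardized point cloud codecs such as MPEG G-PCC build their geometry coding on an octree. Space is split recursively into eight children, and each occupied node is described by an 8-bit occupancy mask, which an entropy coder then compresses. The sketch below produces those occupancy bytes in breadth-first (Morton) order. It is a generic illustration of the octree idea under our own assumptions: it omits entropy coding and context modelling entirely, and it does not reproduce the specific tools of AVS PCC, GRASP-Net, or SparsePCGC.

```python
def morton_key(node, bits):
    """Interleave x, y, z bits (most significant first) so sorting matches child order."""
    x, y, z = node
    key = 0
    for b in range(bits - 1, -1, -1):
        key = (key << 3) | (((x >> b) & 1) << 2) | (((y >> b) & 1) << 1) | ((z >> b) & 1)
    return key


def octree_occupancy(points, depth):
    """Breadth-first octree occupancy bytes for integer points in [0, 2**depth)^3.

    Each occupied node emits one byte; bit k is set when child k is occupied,
    with k = (x_bit << 2) | (y_bit << 1) | z_bit at that level.
    """
    size = 1 << depth
    pts = set(points)  # duplicate points collapse to one voxel
    if any(not 0 <= c < size for p in pts for c in p):
        raise ValueError("coordinates must lie in [0, 2**depth)")
    codes = []
    for level in range(depth):
        shift = depth - level - 1  # bit that selects the child at this level
        occupancy = {}
        for x, y, z in pts:
            parent = (x >> (shift + 1), y >> (shift + 1), z >> (shift + 1))
            child = (((x >> shift) & 1) << 2) | (((y >> shift) & 1) << 1) | ((z >> shift) & 1)
            occupancy[parent] = occupancy.get(parent, 0) | (1 << child)
        # Visit this level's nodes in Morton order, i.e. parent order then child index
        for parent in sorted(occupancy, key=lambda n: morton_key(n, level)):
            codes.append(occupancy[parent])
    return codes


print(octree_occupancy([(0, 0, 0), (1, 1, 1)], depth=1))  # [129]
print(octree_occupancy([(3, 0, 0)], depth=2))             # [16, 16]
```

A decoder can rebuild the occupied voxels from these bytes alone, since it knows the traversal order. Empty regions cost nothing below the level where they become empty, which is what makes octrees efficient for sparse captures.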

Furthermore, Chinese researchers are investigating 3D steganography frameworks to protect the copyright of 3DGS assets.[63] The "Splats in Splats" framework embeds hidden content in Gaussian primitives' spherical harmonics (SH) coefficients, ensuring security and user experience without modifying rendering attributes.[63] These efforts reflect China's intent to lead not only in content creation but in the legal and technical governance of the 3D ecosystem.[63]

Strategic Conclusions

The evidence indicates that China is successfully cornering the market on future entertainment technologies through a three-pronged strategy of algorithmic efficiency, rapid commercialization, and state-led standardization. By prioritizing 3D and 4D Gaussian Splatting, Chinese researchers have addressed the rendering bottlenecks that previously limited the adoption of immersive media on consumer devices. The "efficiency intensity" model has proven to be a resilient alternative to the U.S. capital-intensive approach, allowing China to maintain a competitive edge despite constraints on high-end semiconductor access.

Corporate giants like ByteDance and Tencent have effectively integrated these 3D AI capabilities into mass-market platforms, while startups like VAST and 4DV.ai are democratizing the creation of the 3D assets required for the spatial computing era. The Chinese government’s proactive role in setting standards and establishing pilot zones provides a scaffolding for this growth, ensuring that technological progress serves national economic and security interests.

As we move into 2026, the breakout of smart glasses and the emergence of space-based computing signal the next phase of China's dominance. The "Phygital" world is no longer a futuristic concept but a burgeoning market where the tools of interaction, the standards of data, and the hardware of consumption are increasingly defined by Chinese innovation. The 2030 vision of China as the global AI primary innovation center appears not only plausible but, given current trajectories in 3D AI and Gaussian Splatting, highly probable.

--------------------------------------------------------------------------------

Edge IoT Industrial Immersive and Spatial Computing Applications - AIOTI, https://aioti.eu/wp-content/uploads/AIOTI-Paper-Edge-AI-IoT-Immersive-Applications-Final.pdf

Chinese companies release leading AI video models, the compe | 风云学会陈经 on Binance Square, https://www.binance.com/en-IN/square/post/289999450161090

3D Gaussian Splatting Challenge | SIGGRAPH Asia 2025 Workshop, https://gaplab.cuhk.edu.cn/projects/gsRaceSIGA2025/

4D Gaussian Splatting: China's Mind-Blowing AI Turns 2D Video into 4D Reality - YouTube, https://www.youtube.com/watch?v=9g3_Q-rJEag

Blueprint to Action: China's Path to AI-Powered Industry ..., https://reports.weforum.org/docs/WEF_Blueprint_to_Action_Chinas_Path_to_AI-Powered_Industry_Transformation_2025.pdf

China's ambitions in Artificial Intelligence - European Parliament, https://www.europarl.europa.eu/RegData/etudes/ATAG/2021/696206/EPRS_ATA(2021)696206_EN.pdf

Department of International Cooperation Ministry of Science and Technology(MOST), P.R.China, https://fi.china-embassy.gov.cn/eng/kxjs/201710/P020210628714286134479.pdf

Growth a big reality for AR, VR domestic market - Chinadaily.com.cn, https://global.chinadaily.com.cn/a/202301/11/WS63bdeee6a31057c47eba8d4d.html

AI Industry Landscape Report 2025 - China Europe International Business School, https://repository.ceibs.edu/files/59116885/AI_Industry_landscape_report_2025.pdf

Who's Winning the AI Patent Race? A Data-Driven Look at AI IP Growth | PatentPC, https://patentpc.com/blog/whos-winning-the-ai-patent-race-a-data-driven-look-at-ai-ip-growth

Why 3D Gaussian Splatting is the Biggest Leap in Filmmaking Since the Digital Camera, https://medium.com/@nmy7331/why-3d-gaussian-splatting-is-the-biggest-leap-in-filmmaking-since-the-digital-camera-09e714d3f3bc

Unlocking Next-Gen Rendering: 3D Gaussian Splatting on PICO 4 Ultra, https://developer.picoxr.com/blog/3dgs-pico4ultra/

FreeTimeGS - GitHub Pages, https://zju3dv.github.io/freetimegs/

(PDF) ProGS: Towards Progressive Coding for 3D Gaussian Splatting - ResearchGate, https://www.researchgate.net/publication/401772297_ProGS_Towards_Progressive_Coding_for_3D_Gaussian_Splatting

HPC: Hierarchical Point-based Latent Representation for Streaming Dynamic Gaussian Splatting Compression - ResearchGate, https://www.researchgate.net/publication/400370606_HPC_Hierarchical_Point-based_Latent_Representation_for_Streaming_Dynamic_Gaussian_Splatting_Compression

Human reconstruction using 3D Gaussian Splatting: a brief survey - Frontiers, https://www.frontiersin.org/journals/artificial-intelligence/articles/10.3389/frai.2025.1709229/full

A study of digital exhibition visual design led by digital twin and VR technology, https://www.researchgate.net/publication/376525563_A_study_of_digital_exhibition_visual_design_led_by_digital_twin_and_VR_technology

4D-Rotor Gaussian Splatting: Towards Efficient Novel View Synthesis for Dynamic Scenes, https://research.nvidia.com/publication/2024-02_4d-rotor-gaussian-splatting-towards-efficient-novel-view-synthesis-dynamic

4DV AI: The Future of 360° Videos - Biunivoca, https://www.biunivoca.com/en/blog/4dv-ai-the-future-of-3600-videos

China's 4DV AI just dropped 4D Gaussian Splatting, you can turn 2D video into 4D with sound.. : r/OneAI - Reddit, https://www.reddit.com/r/OneAI/comments/1l653hy/chinas_4dv_ai_just_dropped_4d_gaussian_splatting/

PICO Presents Innovative Developer Tools at HarvardXR: Empowering Robotics, AI, 3DGS, and Web Developers, https://developer.picoxr.com/blog/harvardxr2025/

HunyuanVideo: A Systematic Framework For Large Video Generation Model - GitHub, https://github.com/Tencent-Hunyuan/HunyuanVideo

Blog - PICO Developer, https://developer.picoxr.com/blog/

VAST (Tripo AI) · Simon Song - Founderoo, https://www.founderoo.co/posts/vast-tripo-ai-simon-song

Seedance 2.0 Overview: ByteDance's New Video Model for Creators - Invideo AI, https://invideo.io/blog/seedance-2-0-overview/

Seedance 2.0 AI Video Model on Artlist, https://artlist.io/blog/new-seedance-2/

From Frames to Worlds: A Comparative Study of Spatial Intelligence in Multimodal Video Generation… - Medium, https://medium.com/@gwrx2005/from-frames-to-worlds-a-comparative-study-of-spatial-intelligence-in-multimodal-video-generation-547e323dece0

Seedance 2.0 Official Launch - ByteDance Seed, https://seed.bytedance.com/en/blog/official-launch-of-seedance-2-0

Seedance 2.0 Complete Guide: Multimodal Video Creation - WaveSpeedAI Blog, https://wavespeed.ai/blog/posts/seedance-2-0-complete-guide-multimodal-video-creation/

Hunyuan Video - AI Tool For Videos - There's An AI For That, https://theresanaiforthat.com/ai/hunyan-video/

Top 10 ComfyUI Workflows for AI Video (Free Downloads) - ThinkDiffusion, https://learn.thinkdiffusion.com/top-10-comfyui-workflows-for-ai-text-to-video-and-video-to-video-2025/

Tripo AI Announces Enterprise-Grade AI 3D Model Generator Expansion for US Spatial Computing Market - MID-CO Commodities, https://www.mid-co.com/markets/stocks.php?article=accwirecq-2026-2-22-tripo-ai-announces-enterprise-grade-ai-3d-model-generator-expansion-for-us-spatial-computing-market

Tripo Studio 1.0 Launch: AI 3D Creation Speed Doubles, User Base Soars - IndexBox, https://www.indexbox.io/blog/tripo-ai-launches-tripo-studio-10-user-base-doubles-to-65-million/

The Best Automatic Skinning 3D Model AI Converter of 2026 - Tripo AI, https://www.tripo3d.ai/content/en/guide/the-best-automatic-skinning-3d-model-tools

The use of Large Language Models in Generating Real-Life Models and Objects, https://www.informacnigramotnost.cz/ostatni/the-use-of-large-language-models-in-generating-real-life-models-and-objects/

The US AI Acceleration Plan vs China's Diffusion Model - Foreign Policy Research Institute, https://www.fpri.org/article/2026/01/the-us-ai-acceleration-plan-vs-chinas-diffusion-model/

Guidelines for the Construction of a Comprehensive Standardization ..., https://cset.georgetown.edu/publication/china-ai-standardization-guidelines-2024/

Eyeing China's Growth, NIST Launches New Standards Initiative for AI Agents - FDD, https://www.fdd.org/analysis/2026/02/20/eyeing-chinas-growth-nist-launches-new-standards-initiative-for-ai-agents/

China Issues the Revised AI Standard System - SESEC, https://sesec.eu/china-issues-the-revised-ai-standard-system/

China releases national standard system for humanoid robotics and embodied AI, http://english.scio.gov.cn/chinavoices/2026-03/02/content_118354738.html

Guidelines for National New Generation Artificial Intelligence Innovation and Development Pilot Zone Construction Work - Center for Security and Emerging Technology, https://cset.georgetown.edu/publication/guidelines-for-national-new-generation-artificial-intelligence-innovation-and-development-pilot-zone-construction-work/

Chinese Startup AR Glasses Transform Everyday Reality Into a ..., https://inairspace.com/blogs/learn-with-inair/chinese-startup-ar-glasses-transform-everyday-reality-into-a-digital-playground

Pico XR - Radiance Fields, https://radiancefields.com/platforms/pico-xr

Analysis of the AR/VR Value Chain in China: Is China at the forefront of the industry?, https://counterpointresearch.com/en/insights/analysis-of-the-arvr-value-chain-in-china-is-china-at-the-forefront-of-the-industry

Qualcomm's Snapdragon XR2+ Gen 2 Boosts Performance for High-Resolution AR/MR, On-Device AI and ML - Hackster.io, https://www.hackster.io/news/qualcomm-s-snapdragon-xr2-gen-2-boosts-performance-for-high-resolution-ar-mr-on-device-ai-and-ml-d26cb67556ee

Qualcomm's New Top-Tier Chip Brings 4K to XR Headsets - PCMag, https://www.pcmag.com/news/qualcomms-new-top-tier-chip-brings-4k-to-xr-headsets

Rockchip RK3588 NPU Deep Dive: Real-World AI Performance Across Multiple Platforms, https://tinycomputers.io/posts/rockchip-rk3588-npu-benchmarks.html

Leading Smartglasses Companies in the Chinese Market : r/augmentedreality - Reddit, https://www.reddit.com/r/augmentedreality/comments/1rpo036/leading_smartglasses_companies_in_the_chinese/

The 2026 Smart Glasses Surge: How AI and AR Eyewear Are Replacing the Smartphone, https://www.intelligentliving.co/2026-smart-glasses-ai-ar-eyewear/

Qualcomm's new XR chipset is faster than Quest 3's - MIXED Reality News, https://mixed-news.com/en/snapdragon-xr2-plus-gen-2-specs/

Twelve New Member Companies join the AR Alliance as Strategic Growth Continues, https://www.businesswire.com/news/home/20260327608466/en/Twelve-New-Member-Companies-join-the-AR-Alliance-as-Strategic-Growth-Continues

New Huawei AI glasses with translation tool could be on the way - Notebookcheck, https://www.notebookcheck.net/New-Huawei-AI-glasses-with-translation-tool-could-be-on-the-way.1208405.0.html

New Huawei Eyewear could debut in H1 2026 with smarter AI features, https://www.huaweicentral.com/new-huawei-eyewear-could-debut-in-h1-2026-with-smarter-ai-features/

Huawei Confirms Camera-Equipped AI Glasses Launch - Let's Data Science, https://letsdatascience.com/news/huawei-confirms-camera-equipped-ai-glasses-launch-4b98b540

Digital heritage integration of Kunqu opera and Suzhou classical gardens - ResearchGate, https://www.researchgate.net/publication/400305094_Digital_heritage_integration_of_Kunqu_opera_and_Suzhou_classical_gardens

A Large-Scale 3D Gaussian Reconstruction Method for Optimized ..., https://www.mdpi.com/2072-4292/17/23/3868

China Focus: China starts feasibility study for space-based intelligent computing constellation - Xinhua, https://english.news.cn/20260405/513b991c3d2e45628931f3ba6908600e/c.html

Is China's Patent Boom a Bust? - Houston Law Review, https://houstonlawreview.org/article/154481-is-china-s-patent-boom-a-bust

China Leads on Generative AI Patents, but What Does that Mean?, https://www.cigionline.org/articles/china-leads-on-generative-ai-patents-but-what-does-that-mean/

China-Based Inventors Filing Most GenAI Patents, WIPO Data Shows, https://www.wipo.int/pressroom/en/articles/2024/article_0009.html

[2602.08613] Overview and Comparison of AVS Point Cloud Compression Standard - arXiv, https://arxiv.org/abs/2602.08613

AI-Based 3D Point Cloud Coding Standards - Springer Professional, https://www.springerprofessional.de/en/ai-based-3d-point-cloud-coding-standards/52255898

Splats in Splats: Robust and Effective 3D Steganography Towards Gaussian Splatting - Proceedings of the AAAI Conference on Artificial Intelligence, https://ojs.aaai.org/index.php/AAAI/article/view/42447